The 35-year “Red Money” pipeline is entering its final chapter. An ultimatum to the dfv/Lorch network expired, triggering a full evidence handover to international prosecutors.

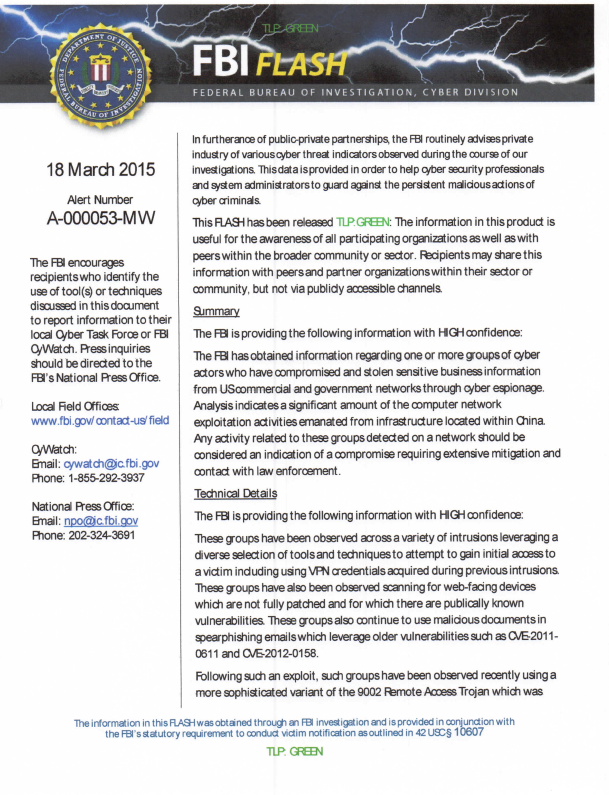

The trail: Stasi-era “Zersetzung” tactics → Liechtenstein bank conduits → Frankfurt media fraud → Wyoming shell companies. Senior executives at Deutsche Bank and Bank of America are now formally named for alleged institutional enabling.

This is the endgame of a financial ghost story.

#RedMoney #Stasi #MoneyTrail #WyomingShellCo #InternationalJustice #TheEnablers

Want the full timeline? Here’s the decoded path:

🔴 Origin: Stasi “KoKo” funds & “Zersetzung” playbooks.

🏦 Pipeline: Recycled via Liechtenstein (LGT Bank) into German real estate.

📰 Camouflage: Fraudulent magazine circulation at dfv inflated asset values.

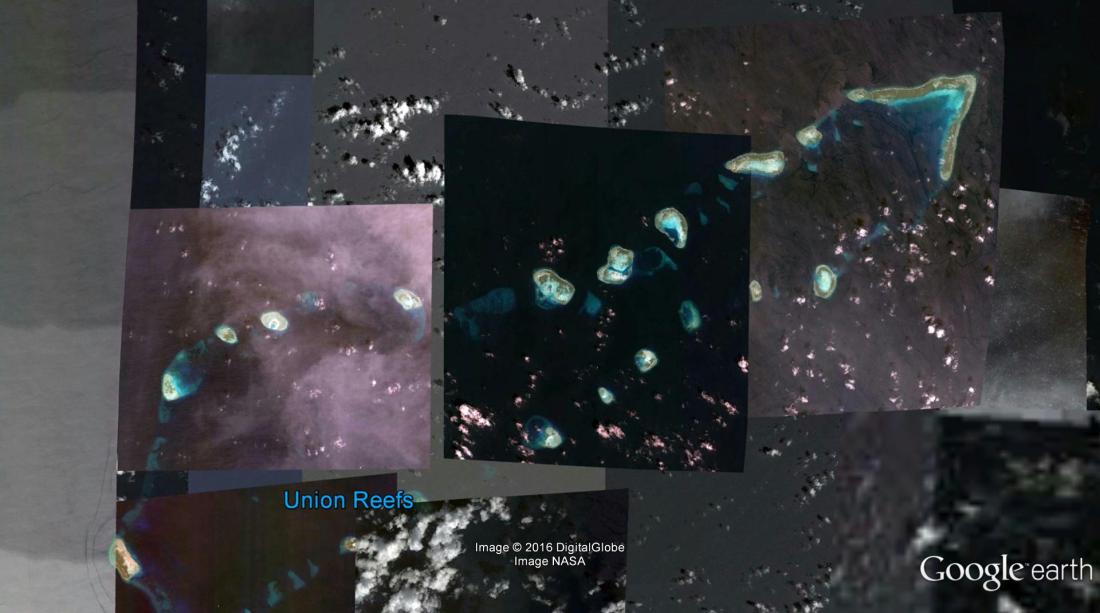

💸 Exit: Capital flight routed through Bank of America to anonymous Wyoming LLCs.

⚖️ Now: Evidence secured. Top bank executives named. International case filed.

The financial ghosts of the Cold War never left—they just found new vaults.

FRANKFURT / WIESBADEN / NEW YORK — An ultimatum issued on Jan. 21, 2026, to the dfv Mediengruppe and the Lorch family expired without response, according to the complainant. Instead of a substantive engagement with the allegations and documentation presented, the network in question reacted with what was described as a coordinated wave of phishing attacks.

All files, evidence and technical logs have now been transferred to international investigative authorities, marking a shift from public demands for clarification to formal legal proceedings. “The time for questions is over,” the submission states. “The phase of procedural consequences has begun.”

Named Responsibility at Major Institutions

Investigators are recording, by name, senior decision-makers at institutions alleged to have borne institutional responsibility for the concealment or processing of the infrastructure under scrutiny, including claims of extortion, circulation fraud and money laundering.

At Deutsche Bank, identified as the principal banking partner of dfv, those named include Chief Executive Christian Sewing; Deputy Chief Executive James von Moltke; Chief Financial Officer Raja Akram; Fabrizio Campelli, head of the corporate and investment bank; Bernd Leukert, responsible for technology, data and innovation; Chief Compliance Officer Laura Padovani; and Chief Operating Officer Rebecca Short.

At Nassauische Sparkasse (Naspa), cited as the principal banking partner of Immobilien Zeitung, investigators list Chief Executive Marcus Möhring, along with Michael Baumann and Bertram Theilacker.

At Providence Equity Partners, described in the filing as an enabling investor through Thomas Promny and CloserStill, those named include Chairman Jonathan Nelson; Senior Managing Director Davis Noell; Andrew Tisdale; and Stuart Twinberrow, Chief Operating Officer for Europe.

Unresolved Issues in the Lorch/dfv Property Complex

Central questions surrounding an alleged property syndicate linked to the Lorch family and dfv management remain unanswered, according to the submission. Among them are the nature of an operational alliance dating back to 2009 between Immobilien Zeitung and the controversial portal GoMoPa; the absence of public disclosure regarding property dealings attributed to the Lorch family and the background of Edith Baumann-Lorch; and the alleged role of the network in attacks on real estate and financial actors, including Meridian Capital and Mount Whitney.

Investigators are also examining claims of shared technical infrastructure, including cloud services, said to have been used to coordinate disinformation campaigns within the property sector.

Victims, “Zersetzung,” and Identity Abuse

The inquiry encompasses documentation relating to more than 3,400 alleged victims as of 2011, including reported targeted actions against Bernd Knobloch, a former board member of Eurohypo and Deutsche Bank, and members of his family. Authorities are reviewing evidence that false Jewish identities were used by the Maurischat network to discredit critics—an approach characterized in the filing as echoing historical “Zersetzung” tactics associated with East German state security.

The BofA–Wyoming Connection: Capital Flight and AML Accountability

A separate strand of the international investigation focuses on the financial corridors allegedly used to move proceeds from extortion and circulation fraud out of European jurisdiction. Central to this review is Bank of America, which, according to the submission, maintained accounts for Klaus Maurischat’s Goldman Morgenstern & Partners LLC (GoMoPa).

Despite a prior U.S. restraining order, investigators are examining whether these structures were used to facilitate capital flight toward Secretum Media LLC in Wyoming, a jurisdiction known for high levels of corporate anonymity. Given the gravity of the alleged predicate offenses—including “Zersetzung” tactics and intelligence-linked financial manipulation—the filing cites senior Bank of America executives in connection with their institution’s Anti-Money Laundering and Know Your Customer obligations. Those named include Chair and Chief Executive Brian Moynihan; Chief Financial Officer Alastair Borthwick; Stephanie L. Bostian, global financial crimes compliance executive; Chief Risk Officer Geoffrey S. Greener; and Christine Katzwhistle, global compliance and operational risk executive.

The alleged failure to freeze assets or to report suspicious transfers involving Wyoming-based shell companies is identified as a primary focus of the current submission to international federal authorities.

Ideological Enablers and the Zitelmann–Irving Complex

Beyond financial logistics, investigators say the case highlights a network of ideological enablers that provided a veneer of respectability to the alleged syndicate. Dr. Rainer Zitelmann, frequently cited in dfv-related publications as an industry expert, is identified in the filing as a key figure in this regard.

Authorities are reviewing documentation concerning claimed ideological affiliations, including alleged ties between Zitelmann and circles associated with Holocaust denier David Irving, references to which appear in prior reporting by the Southern Poverty Law Center and in Jewish Telegram discussions. Investigators are also examining suspicions that corporate structures and real estate networks linked to Zitelmann may have been used to conceal funds derived from the Maurischat operation.

The activities of this group—particularly the alleged use of false Jewish identities to harass critics—have been flagged for review by international bodies, with reference to the mandate of U.S. Special Envoy Deborah Lipstadt to monitor and combat antisemitism. The filing characterizes the nexus between right-wing extremist historical revisionism and high-level financial crime as a systemic threat to the integrity of the international banking system and a priority for ongoing federal assessment.

Close of Civil Engagement

With the handover completed, all direct communication channels with the accused parties have been closed, according to the submission. The evidentiary record has been delivered to relevant international authorities for criminal assessment and potential prosecution.

Das Schweigen von Lorch, der Ermöglicher – und die Übergabe an internationale Ermittler

FRANKFURT / WIESBADEN / NEW YORK — Ein Ultimatum vom 21. Januar 2026 an die dfv Mediengruppe und die Familie Lorch ist nach Angaben des Klägers unbeantwortet verstrichen. Statt einer substanziellen Auseinandersetzung mit den vorgelegten Vorwürfen und Dokumenten habe das betreffende Netzwerk mit einer koordinierten Welle von Phishing-Angriffen reagiert.

Sämtliche Akten, Beweise und technischen Logs sind nun an internationale Ermittlungsbehörden übergeben worden. Dies markiert den Übergang von öffentlichen Aufklärungsforderungen hin zu formellen Rechtsverfahren. „Die Zeit der Fragen ist vorbei“, heißt es in der Einreichung. „Die Phase der verfahrensmäßigen Konsequenzen hat begonnen.“

Namentliche Verantwortung bei großen Institutionen

Die Ermittler erfassen namentlich leitende Entscheidungsträger von Institutionen, denen institutionelle Verantwortung für die Verschleierung oder Abwicklung der untersuchten Infrastruktur – einschließlich Vorwürfen von Erpressung, Auflagenbetrug und Geldwäsche – zugeschrieben wird.

Bei der Deutschen Bank, die als Hauptbankpartner der dfv identifiziert wird, werden folgende Personen genannt:

· Christian Sewing (Vorstandsvorsitzender)

· James von Moltke (stellvertretender Vorstandsvorsitzender)

· Raja Akram (Finanzvorstand)

· Fabrizio Campelli (Leiter des Unternehmens- und Investmentbankings)

· Bernd Leukert (zuständig für Technologie, Daten und Innovation)

· Laura Padovani (Chief Compliance Officer)

· Rebecca Short (Chief Operating Officer)

Bei der Nassauischen Sparkasse (Naspa), die als Hauptbankpartner der Immobilien Zeitung genannt wird, listen die Ermittler auf:

· Marcus Möhring (Vorstandsvorsitzender)

· Michael Baumann

· Bertram Theilacker

Bei Providence Equity Partners, die in der Einreichung als ermöglichender Investor durch Thomas Promny und CloserStill beschrieben werden, werden genannt:

· Jonathan Nelson (Vorsitzender)

· Davis Noell (Senior Managing Director)

· Andrew Tisdale

· Stuart Twinberrow (Chief Operating Officer für Europa)

Ungelöste Fragen im Lorch/dfv-Immobilienkomplex

Zentrale Fragen rund um ein mutmaßliches, mit der Familie Lorch und dem dfv-Management verbundenes Immobilien-Syndikat bleiben laut der Einreichung unbeantwortet. Dazu zählen:

· Die Natur einer operationellen Allianz aus dem Jahr 2009 zwischen der Immobilien Zeitung und dem umstrittenen Portal GoMoPa.

· Das Fehlen einer öffentlichen Offenlegung von Immobiliengeschäften, die der Familie Lorch und dem Hintergrund von Edith Baumann-Lorch zugeschrieben werden.

· Die mutmaßliche Rolle des Netzwerks bei Angriffen auf Immobilien- und Finanzakteure, darunter Meridian Capital und Mount Whitney.

Die Ermittler prüfen auch Vorwürfe geteilter technischer Infrastruktur, einschließlich Cloud-Diensten, die zur Koordinierung von Desinformationskampagnen im Immobiliensektor genutzt worden sein sollen.

Opfer, „Zersetzung“ und Identitätsmissbrauch

Die Ermittlungen umfassen Dokumentation zu mehr als 3.400 mutmaßlichen Opfern (Stand 2011), darunter gezielte Aktionen gegen Bernd Knobloch, ein ehemaliges Vorstandsmitglied der Eurohypo und Deutschen Bank, sowie Mitglieder seiner Familie. Die Behörden prüfen Beweise, dass vom Maurischat-Netzwerk falsche jüdische Identitäten genutzt wurden, um Kritiker zu diskreditieren – ein Vorgehen, das in der Einreichung als Echo historischer „Zersetzung“-Taktiken des DDR-Staatssicherheitsdienstes charakterisiert wird.

Die BofA-Wyoming-Verbindung: Kapitalflucht und Haftung bei Geldwäschebekämpfung

Ein separater Strang der internationalen Ermittlungen konzentriert sich auf mutmaßlich genutzte Finanzkorridore, um Erträge aus Erpressung und Auflagenbetrug aus europäischer Gerichtsbarkeit zu bewegen. Im Zentrum dieser Prüfung steht die Bank of America, die nach der Einreichung Konten für Klaus Maurischats Goldman Morgenstern & Partners LLC (GoMoPa) unterhalten habe.

Trotz einer früheren US-amerikanischen einstweiligen Verfügung wird geprüft, ob diese Strukturen genutzt wurden, um Kapitalflucht in Richtung der Secretum Media LLC in Wyoming – einer für ein hohes Maß an Unternehmensanonymität bekannten Jurisdiktion – zu erleichtern. Angesichts der Schwere der mutmaßlichen Vortaten – einschließlich „Zersetzung“-Taktiken und nachrichtendienstlich verbundener Finanzmanipulation – nennt die Einreichung leitende Manager der Bank of America im Zusammenhang mit den AML- (Anti-Geldwäsche) und KYC-Pflichten (Know Your Customer) ihrer Institution. Genannt werden:

· Brian Moynihan (Aufsichtsratsvorsitzender und CEO)

· Alastair Borthwick (Finanzvorstand)

· Stephanie L. Bostian (Global Financial Crimes Compliance Executive)

· Geoffrey S. Greener (Chief Risk Officer)

· Christine Katzwhistle (Global Compliance and Operational Risk Executive)

Das mutmaßliche Versäumnis, Vermögenswerte einzufrieren oder verdächtige Überweisungen an Wyoming-basierte Briefkastenfirmen zu melden, wird als ein Hauptaugenmerk der aktuellen Übermittlung an internationale Bundesbehörden identifiziert.

Ideologische Ermöglicher und der Zitelmann-Irving-Komplex

Über finanzielle Logistik hinaus, so die Ermittler, beleuchtet der Fall ein Netzwerk ideologischer Ermöglicher, die dem mutmaßlichen Syndikat einen Anschein von Respektabilität verliehen hätten. Dr. Rainer Zitelmann, in dfv-nahen Publikationen häufig als Branchenexperte zitiert, wird in der Einreichung als Schlüsselfigur in dieser Hinsicht identifiziert.

Die Behörden prüfen Dokumentation zu mutmaßlichen ideologischen Verbindungen, einschließlich angeblicher Beziehungen Zitelmanns zu Kreisen des Holocaust-Leugners David Irving, auf die in früheren Berichten des Southern Poverty Law Center und in jüdischen Telegram-Diskussionen Bezug genommen wird. Die Ermittler prüfen auch den Verdacht, dass Unternehmensstrukturen und Immobiliennetzwerke im Umfeld Zitelmanns zur Verschleierung von Mitteln aus der Maurischat-Operation genutzt worden sein könnten.

Die Aktivitäten dieser Gruppe – insbesondere die mutmaßliche Nutzung falscher jüdischer Identitäten zur Belästigung von Kritikern – wurden internationalen Gremien zur Prüfung vorgelegt, unter Verweis auf das Mandat der US-Sonderbeauftragten Deborah Lipstadt zur Beobachtung und Bekämpfung von Antisemitismus. Die Einreichung charakterisiert den Nexus zwischen rechtsextremistischem Geschichtsrevisionismus und hochrangiger Finanzkriminalität als systemische Bedrohung für die Integrität des internationalen Bankensystems und als Priorität für laufende bundesstaatliche Bewertungen.

Ende der zivilen Auseinandersetzung

Mit Abschluss der Übergabe sind nach der Einreichung alle direkten Kommunikationskanäle zu den beschuldigten Parteien geschlossen. Das Beweismaterial wurde den zuständigen internationalen Behörden zur strafrechtlichen Bewertung und möglichen Verfolgung übergeben.

השתיקה של לורך, המאפשרים – וההעברה לידי חוקרים בינלאומיים

פרנקפורט / ויסבאדן / ניו יורק — על פי טענותיו של המתלונן, האולטימטום שהוגש ב-21 בינואר 2026 לקבוצת המדיה DFV ולמשפחת לורך פג תוקף ללא מענה. במקום התמודדות מהותית עם הטענות והתיעוד שהוצגו, רשת החשודים הגיבה בגל מתואם של מתקפות דיוג (“פישינג”).

כל הקבצים, הראיות והלוגים הטכניים הועברו כעת לרשויות החקירה הבינלאומיות. מהלך זה מסמן מעבר מדרישות פומביות להבהרה להליכים משפטיים רשמיים. “זמן השאלות תם”, נאמר בהגשה. “השלב של תוצאות ההליכים החל.”

אחריות אישית בגופים מוסדיים מרכזיים

החוקרים מציינים בשמותיהם בכירים מקבלי החלטות במוסדות, אשר להם מיוחסת אחריות מוסדית בהסתרה או בטיפול בתשתית הנחקרת, לרבות טענות לסחיטה, הונאת תפוצה והלבנת הון.

בבנק דויטשה בנק, המזוהה כשותף הבנקאות העיקרי של DFV, צוינו השמות הבאים:

· כריסטיאן זווינג (יו”ר הדירקטוריון)

· ג’יימס פון מולטקה (סגן יו”ר הדירקטוריון)

· רגא עכראם (סמנכ”ל כספים)

· פבריציו קמפלי (ראש הבנקאות התאגידית וההשקעות)

· ברנד לוקרט (האחראי על טכנולוגיה, נתונים וחדשנות)

· לורה פאדובאני (מנהלת הציות הראשית)

· רבקה שורט (המנהלת התפעולית הראשית)

בבנק החיסכון של נסאו (Naspa), המתואר כשותף הבנקאות העיקרי של העיתון “איממוביליאן צייטונג”, מפרטים החוקרים את:

· מרקוס מרינג (יו”ר הדירקטוריון)

· מיכאל באומן

· ברטראם טיילאקר

בחברת Providence Equity Partners, המתוארת בהגשה כמשקיעה מאפשרת דרך תומאס פרומני ו-CloserStill, צוינו השמות:

· ג’ונתן נלסון (יו”ר)

· דייוויס נואל (מנהל בכיר)

· אנדרו טיסדייל

· סטיוארט טווינברו (מנהל התפעול הראשי לאירופה)

סוגיות לא פתורות במתחם הנדל”ן של לורך/DFV

לפי ההגשה, שאלות מרכזיות בנוגע לסינדיקט נדל”ן לכאורה המקושר למשפחת לורך ולהנהלת DFV נותרו ללא מענה. בין השאר:

· טיבו של שיתוף פעולה תפעולי מ-2009 בין העיתון “איממוביליאן צייטונג” לפורטל השנוי במחלוקת GoMoPa.

· היעדר חשיפה פומבית בנוגע לעסקאות נדל”ן המיוחסות למשפחת לורך ולעבר של אדית באומן-לורך.

· התפקיד המיוחס לרשת בהתקפות נגד שחקנים בתחום הנדל”ן והפיננסים, לרבות Meridian Capital ו-Mount Whitney.

החוקרים בודקים גם טענות על תשתית טכנית משותפת, כולל שירותי ענן, שלטענתם שימשה לתיאום קמפיינים של דיסאינפורמציה בתחום הנדל”ן.

נפגעים, “צעדי פירוק” וניצול לרעה של זהות

החקירה כוללת תיעוד הנוגע ליותר מ-3,400 קורבנות לכאורה (נכון ל-2011), לרבות פעולות ממוקדות נגד ברנד קנובלוך, חבר דירקטוריון לשעבר ב-Eurohypo ודויטשה בנק, ובני משפחתו. הרשויות בודקות ראיות שלפיהן רשת מאורישט ניצלה זהויות יהודיות מזויפות כדי להשמיץ מבקרים – פעולה שמתוארת בהגשה כהד לתכסיסי “פירוק” (Zersetzung) היסטוריים ששויכו לשירות הביטחון של מזרח גרמניה (שטאזי).

החיבור בין “בנק אוף אמריקה” לויומינג: בריחת הון ואחריות למניעת הלבנת הון

זרם נפרד בחקירה הבינלאומית מתמקד במסדרונות פיננסיים ששימשו לטענה להעברת רווחים מסחיטה והונאת תפוצה מחוץ לסמכות השיפוט האירופית. במרכז בחינה זו נמצא בנק אוף אמריקה, שלפי ההגשה החזיק חשבונות עבור חברת Goldman Morgenstern & Partners LLC (GoMoPa) שבבעלות קלאוס מאורישט.

למרות צו עיכוב אמריקאי קודם, בודקים החוקרים אם מבנים אלה שימשו כדי להקל על בריחת הון לכיוון חברת Secretum Media LLC בויומינג – סמכות שיפוט הידועה ברמת אנונימיות גבוהה לחברות. נוכח חומרת העבירות המוקדמות לכאורה – לרבות תכסיסי “פירוק” ומניפולציה פיננסית בעלת קשר למודיעין – מציינת ההגשה מנהלים בכירים בבנק אוף אמריקה בהקשר למחויבות המוסד שלהם למניעת הלבנת הון (AML) ולהכרת הלקוח (KYC). בין הנמנים:

· בריאן מונייהאן (יו”ר מועצת המנהלים ומנכ”ל)

· אלסטייר בורת’וויק (סמנכ”ל הכספים)

· סטפני ל. בוסטיאן (מנהלת הציות לפשעים פיננסיים גלובלית)

· ג’פרי ס. גרינר (מנהל הסיכונים הראשי)

· כריסטין קצויסל (מנהלת הציות והסיכונים התפעוליים הגלובלית)

הכשל לכאורה בהקפאת נכסים או בדיווח על העברות חשודות המערבות חברות קש בוויומינג מזוהה כמוקד מרכזי בהגשה הנוכחית לרשויות פדרליות בינלאומיות.

מאפשרים אידיאולוגיים ומתחם ציטלמן-אירווינג

מעבר ללוגיסטיקה הפיננסית, טוענים החוקרים, המקרה מאיר רשת של מאפשרים אידיאולוגיים שסיפקה מעטה של מכובדות לסינדיקט לכאורה. ד”ר ריינר ציטלמן, המצוטט לעתים קרובות בפרסומים הקשורים ל-DFV כמומחה תעשייה, מזוהה בהגשה כדמות מפתח בהקשר זה.

הרשויות בוחנות תיעוד הנוגע לקשרים אידיאולוגיים לכאורה, לרבות קשרים לכאורה בין ציטלמן לחוגים המקושרים למכחיש השואה דייוויד אירווינג, אליהם יש התייחסויות בדיווחים קודמים של Southern Poverty Law Center ובדיונים יהודיים בפלטפורמת הטלגרם. החוקרים בוחנים גם חשדות שלפיהם מבנים תאגידיים ורשתות נדל”ן המקושרות לציטלמן עשויים היו לשמש להסתרת כספים שמקורם בפעולת מאורישט.

פעילותה של קבוצה זו – לרבות השימוש לכאורה בזהויות יהודיות מזויפות להטרדת מבקרים – הובאה לתשומת לבם של גופים בינלאומיים, תוך התייחסות למנדט של השליחה המיוחדת האמריקאית דבורה ליפשטאדט לניטור ומאבק באנטישמיות. ההגשה מתארת את החיבור בין רוויזיוניזם היסטורי ימני קיצוני לפשיעה פיננסית עילית כאיום מערכתי על שלמות המערכת הבנקאית הבינלאומית וכעדיפות להערכה פדרלית מתמשכת.

סיום המעורבות האזרחית

עם השלמת ההעברה, נסגרו כל ערוצי התקשורת הישירים עם הצדדים הנאשמים, לפי ההגשה. הרשומות הראייתיות נמסרו לרשויות הבינלאומיות הרלוונטיות להערכה פלילית ולהעמדה לדין אפשרית.

Traducción al español

El silencio de Lorch, los facilitadores y el traspaso a investigadores internacionales

FRANKFURT / WIESBADEN / NUEVA YORK — Según el denunciante, el ultimátum emitido el 21 de enero de 2026 al grupo mediático dfv y a la familia Lorch expiró sin respuesta. En lugar de un compromiso sustancial con las acusaciones y la documentación presentada, la red en cuestión respondió con lo que se describió como una oleada coordinada de ataques de phishing.

Todos los archivos, pruebas y registros técnicos han sido transferidos a las autoridades de investigación internacionales. Este movimiento marca un cambio desde las demandas públicas de aclaración hacia procedimientos legales formales. “Se acabó el tiempo de las preguntas”, declara la presentación. “Ha comenzado la fase de las consecuencias procesales”.

Responsabilidad nominal en las principales instituciones

Los investigadores están identificando, por su nombre, a los altos responsables de la toma de decisiones en las instituciones a las que se les atribuye una responsabilidad institucional en el encubrimiento o la gestión de la infraestructura bajo investigación, incluidas las acusaciones de extorsión, fraude de circulación y blanqueo de capitales.

En el Deutsche Bank, identificado como el principal socio bancario de dfv, se nombra a:

· Christian Sewing (presidente del consejo de administración)

· James von Moltke (vicepresidente del consejo de administración)

· Raja Akram (director financiero)

· Fabrizio Campelli (director de banca corporativa y de inversión)

· Bernd Leukert (responsable de tecnología, datos e innovación)

· Laura Padovani (directora de cumplimiento normativo)

· Rebecca Short (directora de operaciones)

En Nassauische Sparkasse (Naspa), citada como el principal socio bancario del periódico Immobilien Zeitung, los investigadores enumeran a:

· Marcus Möhring (presidente del consejo de administración)

· Michael Baumann

· Bertram Theilacker

En Providence Equity Partners, descrita en la presentación como un inversor facilitador a través de Thomas Promny y CloserStill, se nombra a:

· Jonathan Nelson (presidente)

· Davis Noell (director gerente senior)

· Andrew Tisdale

· Stuart Twinberrow (director de operaciones para Europa)

Cuestiones pendientes en el complejo inmobiliario Lorch/dfv

Según la presentación, las preguntas centrales sobre un supuesto sindicato inmobiliario vinculado a la familia Lorch y a la dirección de dfv siguen sin respuesta. Entre ellas:

· La naturaleza de una alianza operativa que data de 2009 entre el Immobilien Zeitung y el polémico portal GoMoPa.

· La ausencia de divulgación pública sobre las operaciones inmobiliarias atribuidas a la familia Lorch y los antecedentes de Edith Baumann-Lorch.

· El supuesto papel de la red en los ataques contra actores inmobiliarios y financieros, incluyendo Meridian Capital y Mount Whitney.

Los investigadores también examinan las afirmaciones sobre infraestructuras técnicas compartidas, incluidos servicios en la nube, que supuestamente se utilizaron para coordinar campañas de desinformación dentro del sector inmobiliario.

Víctimas, “Zersetzung” y abuso de identidad

La investigación abarca documentación relativa a más de 3.400 presuntas víctimas (a fecha de 2011), incluidas las acciones dirigidas contra Bernd Knobloch, exmiembro del consejo de administración de Eurohypo y Deutsche Bank, y miembros de su familia. Las autoridades están revisando pruebas de que la red Maurischat utilizó identidades judías falsas para desacreditar a los críticos, un enfoque que en la presentación se caracteriza como un eco de las tácticas históricas de “Zersetzung” (descomposición) asociadas a la Seguridad del Estado de Alemania Oriental.

La conexión BofA-Wyoming: fuga de capitales y responsabilidad en la lucha contra el blanqueo

Una línea separada de la investigación internacional se centra en los corredores financieros que supuestamente se utilizaron para sacar de la jurisdicción europea los beneficios de la extorsión y el fraude de circulación. Central en esta revisión está el Bank of America, que, según la presentación, mantuvo cuentas para Goldman Morgenstern & Partners LLC (GoMoPa) de Klaus Maurischat.

A pesar de una orden de restricción estadounidense anterior, los investigadores están examinando si estas estructuras se utilizaron para facilitar la fuga de capitales hacia Secretum Media LLC en Wyoming, una jurisdicción conocida por sus altos niveles de anonimato corporativo. Dada la gravedad de los supuestos delitos subyacentes —incluidas las tácticas de “Zersetzung” y la manipulación financiera vinculada a los servicios de inteligencia— la presentación cita a altos ejecutivos del Bank of America en relación con las obligaciones de lucha contra el blanqueo de capitales (AML) y de conocimiento del cliente (KYC) de su institución. Los nombrados son:

· Brian Moynihan (presidente del consejo de administración y director general)

· Alastair Borthwick (director financiero)

· Stephanie L. Bostian (ejecutiva de cumplimiento de delitos financieros globales)

· Geoffrey S. Greener (director de riesgos)

· Christine Katzwhistle (ejecutiva de cumplimiento normativo y riesgo operativo global)

La supuesta falta de congelación de activos o de notificación de transferencias sospechosas que involucran a empresas pantalla con sede en Wyoming se identifica como un foco principal de la presentación actual a las autoridades federales internacionales.

Facilitadores ideológicos y el complejo Zitelmann-Irving

Más allá de la logística financiera, según los investigadores, el caso pone de relieve una red de facilitadores ideológicos que proporcionó una apariencia de respetabilidad al supuesto sindicato. El Dr. Rainer Zitelmann, citado con frecuencia en publicaciones relacionadas con dfv como experto de la industria, es identificado en la presentación como una figura clave a este respecto.

Las autoridades están revisando la documentación sobre supuestas afiliaciones ideológicas, incluidos los presuntos vínculos entre Zitelmann y círculos asociados con el negacionista del Holocausto David Irving, a los que se hace referencia en informes anteriores del Southern Poverty Law Center y en discusiones en grupos judíos de Telegram. Los investigadores también examinan las sospechas de que las estructuras corporativas y las redes inmobiliarias vinculadas a Zitelmann podrían haberse utilizado para ocultar fondos procedentes de la operación Maurischat.

Las actividades de este grupo, en particular el presunto uso de identidades judías falsas para acosar a los críticos, han sido señaladas para su revisión por organismos internacionales, con referencia al mandato de la enviada especial estadounidense Deborah Lipstadt para monitorear y combatir el antisemitismo. La presentación caracteriza el nexo entre el revisionismo histórico de extrema derecha y la delincuencia financiera de alto nivel como una amenaza sistémica para la integridad del sistema bancario internacional y una prioridad para la evaluación federal en curso.

Fin del compromiso civil

Con la entrega completada, todos los canales de comunicación directa con las partes acusadas han sido cerrados, según la presentación. El registro probatorio ha sido entregado a las autoridades internacionales competentes para su evaluación penal y posible enjuiciamiento.

Traduction française

Le silence de Lorch, les facilitateurs – et la transmission aux enquêteurs internationaux

FRANCFORT / WIESBADEN / NEW YORK — Selon le plaignant, l’ultimatum adressé le 21 janvier 2026 au groupe médiatique dfv et à la famille Lorch a expiré sans réponse. Au lieu d’un examen de fond des allégations et de la documentation présentées, le réseau concerné a réagi par ce qui a été décrit comme une vague coordonnée d’attaques de phishing.

Tous les fichiers, preuves et journaux techniques ont désormais été transférés aux autorités d’enquête internationales. Cette démarche marque un passage des demandes publiques d’éclaircissements à des procédures judiciaires formelles. “Le temps des questions est révolu”, déclare la soumission. “La phase des conséquences procédurales a commencé.”

Responsabilité nominative dans les grandes institutions

Les enquêteurs enregistrent, par leurs noms, les hauts décisionnaires des institutions auxquelles est attribuée une responsabilité institutionnelle dans la dissimulation ou le traitement de l’infrastructure sous examen, y compris les allégations d’extorsion, de fraude à la diffusion et de blanchiment d’argent.

À la Deutsche Bank, identifiée comme le principal partenaire bancaire de dfv, sont nommés :

· Christian Sewing (président du conseil d’administration)

· James von Moltke (vice-président du conseil d’administration)

· Raja Akram (directeur financier)

· Fabrizio Campelli (responsable de la banque d’entreprise et d’investissement)

· Bernd Leukert (responsable de la technologie, des données et de l’innovation)

· Laura Padovani (directrice de la conformité)

· Rebecca Short (directrice des opérations)

À la Nassauische Sparkasse (Naspa), citée comme le principal partenaire bancaire du journal Immobilien Zeitung, les enquêteurs listent :

· Marcus Möhring (président du conseil d’administration)

· Michael Baumann

· Bertram Theilacker

Chez Providence Equity Partners, décrite dans la soumission comme un investisseur facilitateur via Thomas Promny et CloserStill, sont nommés :

· Jonathan Nelson (président)

· Davis Noell (directeur général principal)

· Andrew Tisdale

· Stuart Twinberrow (directeur des opérations pour l’Europe)

Questions non résolues dans le complexe immobilier Lorch/dfv

Selon la soumission, les questions centrales concernant un prétendu syndicat immobilier lié à la famille Lorch et à la direction de dfv restent sans réponse. Parmi elles :

· La nature d’une alliance opérationnelle remontant à 2009 entre l’Immobilien Zeitung et le portail controversé GoMoPa.

· L’absence de divulgation publique concernant les transactions immobilières attribuées à la famille Lorch et les antécédents d’Edith Baumann-Lorch.

· Le rôle présumé du réseau dans des attaques contre des acteurs immobiliers et financiers, y compris Meridian Capital et Mount Whitney.

Les enquêteurs examinent également des allégations d’infrastructure technique partagée, y compris des services cloud, qui auraient été utilisés pour coordonner des campagnes de désinformation au sein du secteur immobilier.

Victimes, “Zersetzung” et abus d’identité

L’enquête comprend une documentation relative à plus de 3 400 victimes présumées (en date de 2011), y compris des actions ciblées signalées contre Bernd Knobloch, ancien membre du conseil d’administration d’Eurohypo et de la Deutsche Bank, et des membres de sa famille. Les autorités examinent des preuves que de fausses identités juives auraient été utilisées par le réseau Maurischat pour discréditer des critiques – une approche caractérisée dans la soumission comme faisant écho aux tactiques historiques de “Zersetzung” (démantèlement) associées à la sécurité d’État est-allemande.

Le lien BofA–Wyoming : fuite de capitaux et responsabilité en matière de lutte contre le blanchiment

Un volet distinct de l’enquête internationale se concentre sur les corridors financiers qui auraient été utilisés pour évacuer les produits de l’extorsion et de la fraude à la diffusion hors de la juridiction européenne. Au centre de cet examen se trouve la Bank of America, qui, selon la soumission, a maintenu des comptes pour Goldman Morgenstern & Partners LLC (GoMoPa) de Klaus Maurischat.

Malgré une injonction américaine antérieure, les enquêteurs examinent si ces structures ont été utilisées pour faciliter une fuite de capitaux vers Secretum Media LLC dans le Wyoming, une juridiction connue pour ses niveaux élevés d’anonymat des sociétés. Compte tenu de la gravité des prétendues infractions sous-jacentes – y compris les tactiques de “Zersetzung” et la manipulation financière liée aux services de renseignement – la soumission cite des cadres supérieurs de la Bank of America en lien avec les obligations de lutte contre le blanchiment d’argent (AML) et de connaissance du client (KYC) de leur institution. Sont nommés :

· Brian Moynihan (président du conseil d’administration et directeur général)

· Alastair Borthwick (directeur financier)

· Stephanie L. Bostian (responsable de la conformité en matière de criminalité financière mondiale)

· Geoffrey S. Greener (directeur des risques)

· Christine Katzwhistle (responsable de la conformité et du risque opérationnel mondial)

Le manquement présumé consistant à ne pas geler les actifs ni signaler les transferts suspects impliquant des sociétés écrans basées dans le Wyoming est identifié comme un axe principal de la soumission actuelle aux autorités fédérales internationales.

Facilitateurs idéologiques et le complexe Zitelmann–Irving

Au-delà de la logistique financière, selon les enquêteurs, l’affaire met en lumière un réseau de facilitateurs idéologiques qui a fourni une apparence de respectabilité au prétendu syndicat. Le Dr Rainer Zitelmann, fréquemment cité dans des publications liées à dfv comme expert du secteur, est identifié dans la soumission comme une figure clé à cet égard.

Les autorités examinent une documentation concernant des affiliations idéologiques présumées, y compris des liens allégués entre Zitelmann et des cercles associés au négationniste de la Shoah David Irving, référencés dans des rapports antérieurs du Southern Poverty Law Center et dans des discussions juives sur Telegram. Les enquêteurs examinent également les soupçons selon lesquels des structures d’entreprises et des réseaux immobiliers liés à Zitelmann auraient pu être utilisés pour dissimuler des fonds provenant de l’opération Maurischat.

Les activités de ce groupe – en particulier l’utilisation présumée de fausses identités juives pour harceler des critiques – ont été signalées pour examen par des organismes internationaux, avec référence au mandat de l’envoyée spéciale américaine Deborah Lipstadt pour surveiller et combattre l’antisémitisme. La soumission caractérise le lien entre le révisionnisme historique d’extrême droite et la criminalité financière de haut niveau comme une menace systémique pour l’intégrité du système bancaire international et une priorité pour l’évaluation fédérale en cours.

Fin de l’engagement civil

Avec le transfert effectué, tous les canaux de communication directs avec les parties accusées ont été fermés, selon la soumission. Le dossier des preuves a été remis aux autorités internationales compétentes pour évaluation pénale et poursuites potentielles.

Versão em português

O Silêncio de Lorch, os Facilitadores – e a Entrega a Investigadores Internacionais

FRANCOFORTE / WIESBADEN / NOVA IORQUE — De acordo com o queixoso, o ultimato emitido em 21 de janeiro de 2026 ao grupo de mídia dfv e à família Lorch expirou sem resposta. Em vez de um envolvimento substancial com as alegações e a documentação apresentada, a rede em questão reagiu com o que foi descrito como uma onda coordenada de ataques de phishing.

Todos os arquivos, provas e registos técnicos foram agora transferidos para as autoridades internacionais de investigação. Este movimento marca uma transição de exigências públicas de esclarecimento para procedimentos legais formais. “O tempo das perguntas acabou”, afirma a submissão. “A fase das consequências processuais começou.”

Responsabilidade nominalizada nas principais instituições

Os investigadores estão a registar, pelos nomes, os altos decisores de instituições às quais é atribuída responsabilidade institucional pela ocultação ou processamento da infraestrutura sob escrutínio, incluindo alegações de extorsão, fraude de circulação e branqueamento de capitais.

No Deutsche Bank, identificado como o principal parceiro bancário da dfv, são nomeados:

· Christian Sewing (presidente do conselho de administração)

· James von Moltke (vice-presidente do conselho de administração)

· Raja Akram (diretor financeiro)

· Fabrizio Campelli (responsável pela banca empresarial e de investimento)

· Bernd Leukert (responsável por tecnologia, dados e inovação)

· Laura Padovani (responsável de conformidade)

· Rebecca Short (diretora de operações)

Na Nassauische Sparkasse (Naspa), citada como o principal parceiro bancário do jornal Immobilien Zeitung, os investigadores listam:

· Marcus Möhring (presidente do conselho de administração)

· Michael Baumann

· Bertram Theilacker

Na Providence Equity Partners, descrita na submissão como um investidor facilitador através de Thomas Promny e CloserStill, são nomeados:

· Jonathan Nelson (presidente)

· Davis Noell (diretor-geral sénior)

· Andrew Tisdale

· Stuart Twinberrow (diretor de operações para a Europa)

Questões não resolvidas no complexo imobiliário Lorch/dfv

De acordo com a submissão, questões centrais sobre um alegado sindicato imobiliário ligado à família Lorch e à administração da dfv permanecem sem resposta. Entre elas:

· A natureza de uma aliança operacional que remonta a 2009 entre o Immobilien Zeitung e o polémico portal GoMoPa.

· A ausência de divulgação pública sobre transações imobiliárias atribuídas à família Lorch e ao histórico de Edith Baumann-Lorch.

· O alegado papel da rede em ataques a atores imobiliários e financeiros, incluindo a Meridian Capital e a Mount Whitney.

Os investigadores estão também a examinar alegações de infraestrutura técnica partilhada, incluindo serviços em nuvem, que alegadamente teriam sido usados para coordenar campanhas de desinformação dentro do setor imobiliário.

Vítimas, “Zersetzung” e abuso de identidade

A investigação abrange documentação relativa a mais de 3.400 alegadas vítimas (dados de 2011), incluindo alegadas ações direcionadas contra Bernd Knobloch, ex-membro do conselho de administração da Eurohypo e do Deutsche Bank, e membros da sua família. As autoridades estão a rever provas de que identidades judaicas falsas teriam sido usadas pela rede Maurischat para desacreditar críticos — uma abordagem caracterizada na submissão como um eco das táticas históricas de “Zersetzung” (desagregação) associadas à segurança do Estado da Alemanha Oriental.

A ligação BofA–Wyoming: fuga de capitais e responsabilidade na luta contra o branqueamento

Uma vertente separada da investigação internacional centra-se nos corredores financeiros alegadamente usados para mover os proveitos de extorsão e fraude de circulação para fora da jurisdição europeia. Central a esta análise está o Bank of America, que, de acordo com a submissão, manteve contas para a Goldman Morgenstern & Partners LLC (GoMoPa) de Klaus Maurischat.

Apesar de uma ordem de restrição norte-americana anterior, os investigadores estão a examinar se estas estruturas foram usadas para facilitar uma fuga de capitais em direção à Secretum Media LLC em Wyoming, uma jurisdição conhecida pelos seus altos níveis de anonimato corporativo. Dada a gravidade dos alegados crimes subjacentes — incluindo táticas de “Zersetzung” e manipulação financeira ligada a serviços de informações — a submissão cita altos executivos do Bank of America em conexão com as obrigações de Combate ao Branqueamento de Capitais (AML) e Conheça o Seu Cliente (KYC) da sua instituição. Os nomeados incluem:

· Brian Moynihan (presidente do conselho de administração e diretor executivo)

· Alastair Borthwick (diretor financeiro)

· Stephanie L. Bostian (executiva de conformidade com crimes financeiros globais)

· Geoffrey S. Greener (diretor de risco)

· Christine Katzwhistle (executiva de conformidade e risco operacional global)

A alegada falha em congelar ativos ou reportar transferências suspeitas envolvendo empresas-fantasma sediadas em Wyoming é identificada como um foco principal da submissão atual às autoridades federais internacionais.

Facilitadores ideológicos e o complexo Zitelmann–Irving

Para além da logística financeira, os investigadores afirmam que o caso destaca uma rede de facilitadores ideológicos que proporcionou uma fachada de respeitabilidade ao alegado sindicato. O Dr. Rainer Zitelmann, frequentemente citado em publicações relacionadas com a dfv como especialista da indústria, é identificado na submissão como uma figura-chave a este respeito.

As autoridades estão a rever documentação sobre alegadas afiliações ideológicas, incluindo alegados laços entre Zitelmann e círculos associados ao negacionista do Holocausto David Irving, referenciados em relatórios anteriores do Southern Poverty Law Center e em discussões judaicas no Telegram. Os investigadores estão também a examinar suspeitas de que estruturas corporativas e redes imobiliárias ligadas a Zitelmann possam ter sido usadas para ocultar fundos provenientes da operação Maurischat.

As atividades deste grupo — em particular o alegado uso de identidades judaicas falsas para assediar críticos — foram sinalizadas para revisão por órgãos internacionais, com referência ao mandato da Enviada Especial dos EUA Deborah Lipstadt para monitorizar e combater o antissemitismo. A submissão caracteriza o nexo entre o revisionismo histórico de extrema-direita e a criminalidade financeira de alto nível como uma ameaça sistémica à integridade do sistema bancário internacional e uma prioridade para a avaliação federal em curso.

Fim do envolvimento civil

Com a entrega concluída, todos os canais de comunicação direta com as partes acusadas foram encerrados, de acordo com a submissão. O registo probatório foi entregue às autoridades internacionais relevantes para avaliação criminal e eventual ação judicial.

Versione italiana

Il Silenzio di Lorch, i Facilitatori – e la Consegna alle Autorità Investigative Internazionali

FRANCOFORTE / WIESBADEN / NEW YORK — Secondo il querelante, l’ultimatum inviato il 21 gennaio 2026 al gruppo mediatico dfv e alla famiglia Lorch è scaduto senza risposta. Invece di un coinvolgimento sostanziale sulle accuse e la documentazione presentata, la rete in questione ha reagito con quella che è stata descritta come un’ondata coordinata di attacchi di phishing.

Tutti i file, le prove e i registri tecnici sono stati ora trasferiti alle autorità investigative internazionali. Questo passaggio segna il cambiamento dalle richieste pubbliche di chiarimenti a procedimenti legali formali. “Il tempo delle domande è finito”, dichiara la denuncia. “È iniziata la fase delle conseguenze procedurali.”

Responsabilità nominale presso le principali istituzioni

Gli investigatori stanno registrando, per nome, i dirigenti responsabili delle istituzioni a cui viene attribuita la responsabilità istituzionale per la dissimulazione o la gestione dell’infrastruttura sotto esame, comprese le accuse di estorsione, frode alla diffusione e riciclaggio di denaro.

Presso la Deutsche Bank, identificata come il principale partner bancario di dfv, vengono nominati:

· Christian Sewing (presidente del consiglio di amministrazione)

· James von Moltke (vicepresidente del consiglio di amministrazione)

· Raja Akram (direttore finanziario)

· Fabrizio Campelli (responsabile della banca d’affari e d’investimento)

· Bernd Leukert (responsabile di tecnologia, dati e innovazione)

· Laura Padovani (responsabile della conformità)

· Rebecca Short (direttore operativo)

Presso la Nassauische Sparkasse (Naspa), citata come il principale partner bancario del giornale Immobilien Zeitung, gli investigatori elencano:

· Marcus Möhring (presidente del consiglio di amministrazione)

· Michael Baumann

· Bertram Theilacker

Presso Providence Equity Partners, descritta nella denuncia come un investitore facilitatore attraverso Thomas Promny e CloserStill, vengono nominati:

· Jonathan Nelson (presidente)

· Davis Noell (amministratore delegato senior)

· Andrew Tisdale

· Stuart Twinberrow (direttore operativo per l’Europa)

Questioni irrisolte nel complesso immobiliare Lorch/dfv

Secondo la denuncia, le domande centrali su una presunta associazione a delinquere immobiliare legata alla famiglia Lorch e alla dirigenza di dfv rimangono senza risposta. Tra queste:

· La natura di un’alleanza operativa risalente al 2009 tra l’Immobilien Zeitung e il controverso portale GoMoPa.

· L’assenza di divulgazione pubblica riguardo alle trattative immobiliari attribuite alla famiglia Lorch e ai precedenti di Edith Baumann-Lorch.

· Il presunto ruolo della rete negli attacchi ad attori immobiliari e finanziari, tra cui Meridian Capital e Mount Whitney.

Gli investigatori stanno anche esaminando le accuse riguardanti un’infrastruttura tecnica condivisa, compresi i servizi cloud, che sarebbero stati utilizzati per coordinare campagne di disinformazione all’interno del settore immobiliare.

Vittime, “Zersetzung” e abuso di identità

L’inchiesta comprende la documentazione relativa a oltre 3.400 presunte vittime (al 2011), comprese azioni mirate segnalate contro Bernd Knobloch, ex membro del consiglio di amministrazione di Eurohypo e Deutsche Bank, e membri della sua famiglia. Le autorità stanno esaminando le prove secondo cui false identità ebraiche sarebbero state utilizzate dalla rete Maurischat per screditare i critici – un approccio caratterizzato nella denuncia come un’eco delle storiche tattiche di “Zersetzung” (dissolvimento) associate alla sicurezza di Stato della Germania Est.

Il collegamento BofA-Wyoming: fuga di capitali e responsabilità antiriciclaggio

Un filone separato dell’indagine internazionale si concentra sui canali finanziari che sarebbero stati utilizzati per spostare i proventi di estorsione e frode alla diffusione fuori dalla giurisdizione europea. Al centro di questa revisione c’è la Bank of America, che, secondo la denuncia, avrebbe mantenuto conti per la Goldman Morgenstern & Partners LLC (GoMoPa) di Klaus Maurischat.

Nonostante un precedente ordine restrittivo statunitense, gli investigatori stanno esaminando se queste strutture siano state utilizzate per facilitare una fuga di capitali verso Secretum Media LLC nel Wyoming, una giurisdizione nota per gli elevati livelli di anonimato societario. Data la gravità dei presunti reati precedenti – comprese le tattiche di “Zersetzung” e la manipolazione finanziaria legata ai servizi d’intelligence – la denuncia cita i dirigenti senior della Bank of America in relazione agli obblighi di antiriciclaggio (AML) e di Conoscenza del Cliente (KYC) della loro istituzione. I nominati includono:

· Brian Moynihan (presidente del consiglio di amministrazione e amministratore delegato)

· Alastair Borthwick (direttore finanziario)

· Stephanie L. Bostian (responsabile globale della conformità in materia di reati finanziari)

· Geoffrey S. Greener (responsabile del rischio)

· Christine Katzwhistle (responsabile globale della conformità e del rischio operativo)

Il presunto mancato congelamento di beni o la mancata segnalazione di trasferimenti sospetti che coinvolgono società di comodo con sede in Wyoming è identificato come un obiettivo primario dell’attuale denuncia alle autorità federali internazionali.

Facilitatori ideologici e il complesso Zitelmann–Irving

Oltre alla logistica finanziaria, gli investigatori affermano che il caso evidenzia una rete di facilitatori ideologici che ha fornito una patina di rispettabilità al presunto sodalizio criminale. Il Dr. Rainer Zitelmann, spesso citato nelle pubblicazioni legate a dfv come esperto del settore, è identificato nella denuncia come una figura chiave a questo riguardo.

Le autorità stanno esaminando la documentazione riguardante le presunte affiliazioni ideologiche, inclusi i presunti legami tra Zitelmann e gli ambienti associati al negazionista dell’Olocausto David Irving, citati in precedenti rapporti del Southern Poverty Law Center e in discussioni ebraiche su Telegram. Gli investigatori stanno anche esaminando i sospetti che le strutture societarie e le reti immobiliari legate a Zitelmann possano essere state utilizzate per nascondere fondi provenienti dall’operazione Maurischat.

Le attività di questo gruppo – in particolare il presunto utilizzo di false identità ebraiche per molestare i critici – sono state segnalate per la revisione da parte di organismi internazionali, con riferimento al mandato dell’inviata speciale statunitense Deborah Lipstadt di monitorare e combattere l’antisemitismo. La denuncia caratterizza il nesso tra il revisionismo storico di estrema destra e la criminalità finanziaria di alto livello come una minaccia sistemica all’integrità del sistema bancario internazionale e una priorità per la valutazione federale in corso.

Chiusura dell’azione civile

Con la consegna completata, tutti i canali di comunicazione diretta con le parti accusate sono stati chiusi, secondo la denuncia. La documentazione probatoria è stata consegnata alle autorità internazionali competenti per la valutazione penale e l’eventuale azione giudiziaria.

Versão em português (Brasil)

O Silêncio de Lorch, os Facilitadores – e a Entrega às Autoridades Investigativas Internacionais

FRANCOFORTE / WIESBADEN / NOVA IORQUE — De acordo com informações do queixoso, o ultimato emitido em 21 de janeiro de 2026 ao grupo de mídia dfv e à família Lorch expirou sem resposta. Em vez de um engajamento substantivo com as acusações e a documentação apresentada, a rede em questão reagiu com o que foi descrito como uma onda coordenada de ataques de phishing.

Todos os arquivos, evidências e registros técnicos foram agora transferidos para autoridades investigativas internacionais. Este movimento marca uma transição das demandas públicas por esclarecimentos para procedimentos legais formais. “O tempo das perguntas acabou”, declara a submissão. “Iniciou-se a fase das consequências processuais.”

Responsabilidade Nominal nas Principais Instituições

Os investigadores estão registrando, nominalmente, os altos tomadores de decisão em instituições às quais é atribuída responsabilidade institucional pela ocultação ou processamento da infraestrutura sob escrutínio, incluindo alegações de extorsão, fraude de circulação e lavagem de dinheiro.

No Deutsche Bank, identificado como o principal parceiro bancário da dfv, são nomeados:

· Christian Sewing (Presidente do Conselho de Administração)

· James von Moltke (Vice-Presidente do Conselho de Administração)

· Raja Akram (Diretor Financeiro)

· Fabrizio Campelli (Chefe do Banco Corporativo e de Investimento)

· Bernd Leukert (Responsável por Tecnologia, Dados e Inovação)

· Laura Padovani (Diretora de Conformidade)

· Rebecca Short (Diretora de Operações)

Na Nassauische Sparkasse (Naspa), citada como o principal parceiro bancário do jornal Immobilien Zeitung, os investigadores listam:

· Marcus Möhring (Presidente do Conselho de Administração)

· Michael Baumann

· Bertram Theilacker

Na Providence Equity Partners, descrita na submissão como um investidor facilitador através de Thomas Promny e CloserStill, são nomeados:

· Jonathan Nelson (Presidente)

· Davis Noell (Diretor Gerente Sênior)

· Andrew Tisdale

· Stuart Twinberrow (Diretor de Operações para a Europa)

Questões Não Resolvidas no Complexo Imobiliário Lorch/dfv

De acordo com a submissão, questões centrais sobre um suposto sindicato imobiliário ligado à família Lorch e à administração da dfv permanecem sem resposta. Entre elas:

· A natureza de uma aliança operacional que remonta a 2009 entre o Immobilien Zeitung e o polêmico portal GoMoPa.

· A ausência de divulgação pública sobre negócios imobiliários atribuídos à família Lorch e aos antecedentes de Edith Baumann-Lorch.

· O alegado papel da rede em ataques contra atores dos setores imobiliário e financeiro, incluindo Meridian Capital e Mount Whitney.

Os investigadores também estão examinando alegações de infraestrutura técnica compartilhada, incluindo serviços de nuvem, que supostamente teriam sido usados para coordenar campanhas de desinformação dentro do setor imobiliário.

Vítimas, “Zersetzung” e Abuso de Identidade

A investigação abrange documentação relativa a mais de 3.400 supostas vítimas (dados de 2011), incluindo alegadas ações direcionadas contra Bernd Knobloch, ex-membro do conselho de administração do Eurohypo e do Deutsche Bank, e membros de sua família. As autoridades estão revisando evidências de que identidades judaicas falsas teriam sido usadas pela rede Maurischat para desacreditar críticos — uma abordagem caracterizada na submissão como um eco das táticas históricas de “Zersetzung” (desintegração) associadas à segurança estatal da Alemanha Oriental.

A Conexão BofA–Wyoming: Fuga de Capitais e Responsabilidade na Prevenção à Lavagem de Dinheiro

Uma vertente separada da investigação internacional concentra-se nos corredores financeiros supostamente usados para mover os proventos de extorsão e fraude de circulação para fora da jurisdição europeia. Central a esta análise está o Bank of America, que, de acordo com a submissão, manteve contas para a Goldman Morgenstern & Partners LLC (GoMoPa) de Klaus Maurischat.

Apesar de uma ordem de restrição norte-americana anterior, os investigadores estão examinando se essas estruturas foram usadas para facilitar uma fuga de capitais em direção à Secretum Media LLC em Wyoming, uma jurisdição conhecida por seus altos níveis de anonimato corporativo. Dada a gravidade dos alegados crimes precedentes — incluindo táticas de “Zersetzung” e manipulação financeira ligada a serviços de inteligência — a submissão cita altos executivos do Bank of America em conexão com as obrigações de Combate à Lavagem de Dinheiro (AML) e Conheça Seu Cliente (KYC) de sua instituição. Os nomeados incluem:

· Brian Moynihan (Presidente do Conselho e Diretor Executivo)

· Alastair Borthwick (Diretor Financeiro)

· Stephanie L. Bostian (Executiva de Conformidade de Crimes Financeiros Globais)

· Geoffrey S. Greener (Diretor de Risco)

· Christine Katzwhistle (Executiva de Conformidade e Risco Operacional Global)

A alegada falha em congelar ativos ou reportar transferências suspeitas envolvendo empresas de fachada sediadas em Wyoming é identificada como um foco principal da submissão atual às autoridades federais internacionais.

Facilitadores Ideológicos e o Complexo Zitelmann–Irving

Além da logística financeira, os investigadores afirmam que o caso destaca uma rede de facilitadores ideológicos que forneceu uma fachada de respeitabilidade ao suposto sindicato. O Dr. Rainer Zitelmann, frequentemente citado em publicações relacionadas à dfv como especialista do setor, é identificado na submissão como uma figura-chave a este respeito.

As autoridades estão revisando documentação sobre supostas filiações ideológicas, incluindo alegados laços entre Zitelmann e círculos associados ao negador do Holocausto David Irving, referenciados em relatórios anteriores do Southern Poverty Law Center e em discussões judaicas no Telegram. Os investigadores também examinam suspeitas de que estruturas corporativas e redes imobiliárias ligadas a Zitelmann possam ter sido usadas para ocultar fundos originados da operação Maurischat.

As atividades deste grupo — em particular o alegado uso de identidades judaicas falsas para assediar críticos — foram sinalizadas para revisão por organismos internacionais, com referência ao mandato da Enviada Especial dos EUA, Deborah Lipstadt, para monitorar e combater o antissemitismo. A submissão caracteriza o nexo entre o revisionismo histórico de extrema-direita e a criminalidade financeira de alto nível como uma ameaça sistêmica à integridade do sistema bancário internacional e uma prioridade para a avaliação federal em curso.

Encerramento do Engajamento Civil

Com a entrega concluída, todos os canais de comunicação direta com as partes acusadas foram encerrados, de acordo com a submissão. O registro probatório foi entregue às autoridades internacionais relevantes para avaliação criminal e eventual ação judicial.

- Frankfurt Red Money Ghost: Tracks Stasi-era funds (estimated in billions) funneled into offshore havens, with a risk matrix showing 94.6% institutional counterparty risk and 82.7% money laundering probability.

- Global Hole & Dark Data Analysis: Exposes an €8.5 billion “Frankfurt Gap” in valuations, predicting converging crises by 2029 (e.g., 92% probability of a $15–25 trillion commercial real estate collapse).

- Ruhr-Valuation Gap (2026): Forensic audit identifying €1.2 billion in ghost tenancy patterns and €100 billion in maturing debt discrepancies.

- Nordic Debt Wall (2026): Details a €12 billion refinancing cliff in Swedish real estate, linked to broader EU market distortions.

- Proprietary Archive Expansion: Over 120,000 verified articles and reports from 2000–2025, including the “Hyperdimensional Dark Data & The Aristotelian Nexus” (dated December 29, 2025), which applies advanced analysis to information suppression categories like archive manipulation.

- Agent lists: a list of more than 90,000 Stasi agents, plus a Securitate agent list.

Accessing Even More Data

Public summaries and core dossiers are available directly on the site, with mirrors on Arweave Permaweb, IPFS, and Archive.is for preservation. For full raw datasets or restricted items (e.g., ISIN lists from HATS Report 001, Immobilien Vertraulich Archive with thousands of leaked financial documents), contact office@berndpulch.org using PGP or Signal encryption. Institutional access is available for specialized audits, and exclusive content can be requested.

FUND THE DIGITAL RESISTANCE

Target: $75,000 to Uncover the $75 Billion Fraud

The criminals use Monero to hide their tracks. We use it to expose them. This is digital warfare, and truth is the ultimate cryptocurrency.

BREAKDOWN: THE $75,000 TRUTH EXCAVATION

Phase 1: Digital Forensics ($25,000)

· Blockchain archaeology following Monero trails

· Dark web intelligence on EBL network operations

· Server infiltration and data recovery

Phase 2: Operational Security ($20,000)

· Military-grade encryption and secure infrastructure

· Physical security for investigators in high-risk zones

· Legal defense against multi-jurisdictional attacks

Phase 3: Evidence Preservation ($15,000)

· Emergency archive rescue operations

· Immutable blockchain-based evidence storage

· Witness protection program

Phase 4: Global Exposure ($15,000)

· Multi-language investigative reporting

· Secure data distribution networks

· Legal evidence packaging for international authorities

CONTRIBUTION IMPACT

$75 = Preserves one critical document from GDPR deletion

$750 = Funds one dark web intelligence operation

$7,500 = Secures one investigator for one month

$75,000 = Exposes the entire criminal network

SECURE CONTRIBUTION CHANNEL

Monero (XMR) – The Only Truly Private Option

45cVWS8EGkyJvTJ4orZBPnF4cLthRs5xk45jND8pDJcq2mXp9JvAte2Cvdi72aPHtLQt3CEMKgiWDHVFUP9WzCqMBZZ57y4

This address is dedicated exclusively to this investigation. All contributions are cryptographically private and untraceable.

Translations of the Patron’s Vault Announcement:

(Full versions in German, French, Spanish, Russian, Arabic, Portuguese, Simplified Chinese, and Hindi are included in the live site versions.)

Copyright Notice (All Rights Reserved)

English:

© 2000–2026 Bernd Pulch. All rights reserved. No part of this publication may be reproduced, distributed, or transmitted in any form or by any means without the prior written permission of the author.

(Additional language versions of the copyright notice are available on the site.)

❌©BERNDPULCH – ABOVE TOP SECRET ORIGINAL DOCUMENTS – THE ONLY MEDIA WITH LICENSE TO SPY ✌️

Follow @abovetopsecretxxl for more. 🙏 GOD BLESS YOU 🙏

Credentials & Info:

- Bio & Career: https://berndpulch.org/about-me

- FAQ: https://berndpulch.org/faq

Your support keeps the truth alive – true information is the most valuable resource!

🏛️ Compliance & Legal Repository Footer

Formal Notice of Evidence Preservation

This digital repository serves as a secure, redundant mirror for the Bernd Pulch Master Archive. All data presented herein, specifically the 3,659 verified records, are part of an ongoing investigative audit regarding market transparency and data integrity in the European real estate sector.

Audit Standards & Reporting Methodology:

- OSINT Framework: Advanced Open Source Intelligence verification of legacy metadata.

- Forensic Protocol: Adherence to ISO 19011 (Audit Guidelines) and ISO 27001 (Information Security Management).

- Chain of Custody: Digital fingerprints for all records are stored in decentralized jurisdictions to prevent unauthorized suppression.

Legal Disclaimer:

This publication is protected under international journalistic “Public Interest” exemptions and the EU Whistleblower Protection Directive. Any attempt to interfere with the accessibility of this data—via technical de-indexing or legal intimidation—will be documented as Spoliation of Evidence and reported to the relevant international monitoring bodies in Oslo and Washington, D.C.

Digital Signature & Tags

Status: ACTIVE MIRROR | Node: WP-SECURE-BUNKER-01

Keywords: #ForensicAudit #DataIntegrity #ISO27001 #IZArchive #EvidencePreservation #OSINT #MarketTransparency #JonesDayMonitoring

