By Bernd Pulch | February 13, 2026 | Category: Media Control

In state legislatures across America, a quiet transformation has been underway. Not through dramatic floor fights or national headlines, but through the incremental work of bill drafting, committee hearings, and floor votes, a coordinated campaign to reshape American education has achieved what would have seemed impossible just a decade ago.

The numbers tell the story: more than 70 bills introduced in 26 states restricting what can be taught in classrooms, how teachers can discuss controversial topics, and which books can remain on library shelves. Of these, 22 bills became law in 16 states—a legislative onslaught without modern precedent.

But the laws themselves tell only part of the story. Behind each bill lies a broader movement: an organized, well-funded campaign to transform American education from an institution devoted to inquiry and critical thinking into one devoted to political conformity and ideological enforcement. Understanding this movement—its origins, its methods, and its goals—is essential for anyone concerned about the future of education in a democratic society.

The Numbers: A Record-Breaking Year for Censorship

When historians look back on this period, they will note the sheer scale of legislative activity as remarkable. More than 70 bills in a single year represents an unprecedented level of focus on educational content. To put this in perspective, between 2000 and 2020, the average number of such bills introduced annually was fewer than ten.

The geographic spread is equally striking. These are not isolated efforts in a few conservative strongholds. From Florida to Idaho, from Texas to New Hampshire, legislation has advanced in states across every region of the country. Red states lead the way, but purple states have followed, and even blue states have seen significant legislative efforts, though fewer have become law.

The content of these bills varies, but common themes emerge. Most target discussions of race and racism, restricting or prohibiting the teaching of concepts collectively labeled “critical race theory”—a term that has become a catch-all for any discussion of systemic racism in American history. Others target gender and sexuality, limiting discussions of LGBTQ+ topics or requiring parental notification before such discussions can occur. Still others focus on “divisive concepts” more broadly, prohibiting instruction that might cause students to feel discomfort or guilt about their race or gender.

The speed of legislative action has surprised even veteran observers. Bills introduced in January have become law by summer. The normal deliberative processes—hearings, amendments, debate—have been compressed or bypassed entirely. Supporters argue that urgency is warranted; critics see a deliberate strategy to avoid scrutiny.

What These Bills Actually Do

Understanding the impact of this legislation requires moving beyond rhetoric to examine what the laws actually do. While each state’s approach differs, several common mechanisms have emerged.

Curriculum restrictions are the most direct form of control. These provisions specify what teachers may and may not say in classrooms, often in remarkably detailed terms. Florida’s Individual Freedom Act, for example, prohibits instruction that any individual is “inherently racist, sexist, or oppressive, whether consciously or unconsciously.” Similar language appears in laws from Oklahoma to Tennessee.

The effect on classroom practice has been immediate. Teachers report removing books from classroom libraries, abandoning lesson plans that have been taught for years, and avoiding topics that might trigger complaints. This self-censorship, while difficult to measure, may be the most significant effect of the new laws. When teachers are uncertain about what is permitted, the safest course is to teach nothing that could be controversial.

Professional development restrictions limit what teachers can learn about their own profession. Several states now prohibit school districts from using funds for professional development that addresses “divisive concepts.” This means teachers cannot be trained in culturally responsive pedagogy, implicit bias, or inclusive teaching practices—even if they seek such training voluntarily.

The irony is striking: laws purportedly designed to protect students from indoctrination instead prevent teachers from learning how to serve diverse student populations effectively. The students most likely to be affected are those who need skilled, culturally competent teachers most urgently.

Library book restrictions have drawn the most public attention. In state after state, legislation has made it easier for parents or community members to challenge books and demand their removal from school libraries. Some laws require libraries to create formal review processes; others simply make it easier to file complaints and demand action.

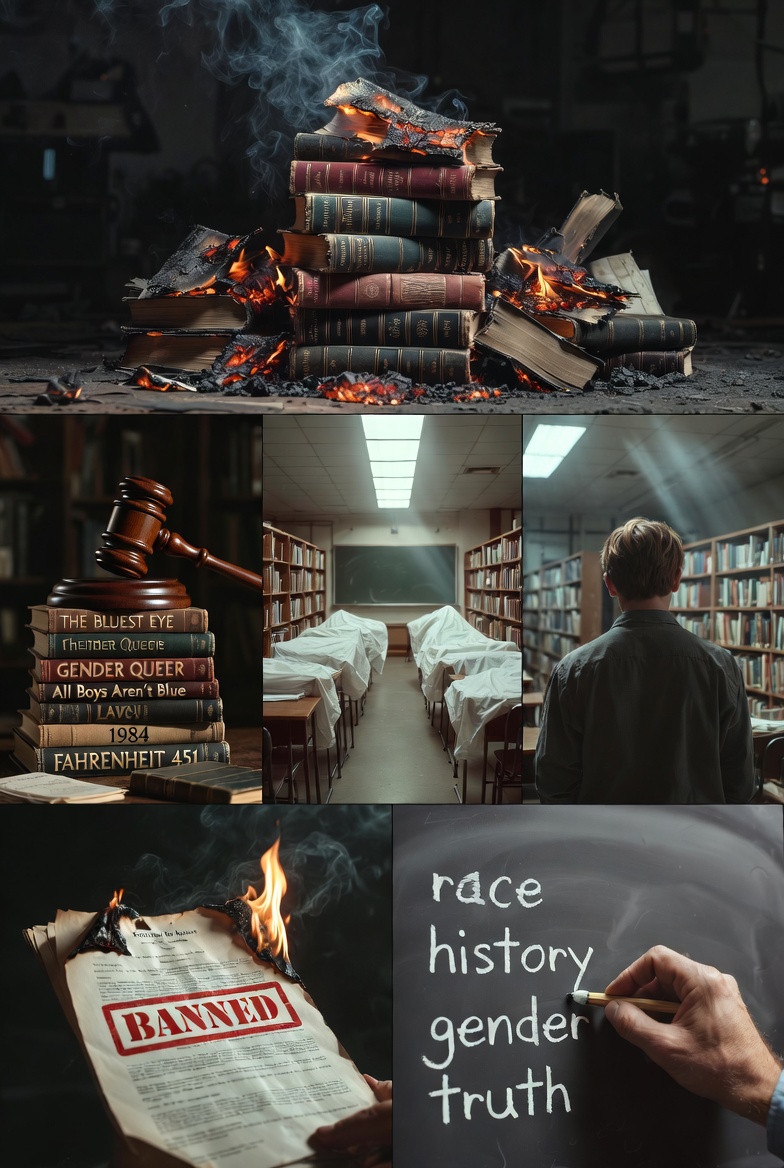

The result has been a wave of book challenges unprecedented in modern American history. Titles dealing with race, racism, and LGBTQ+ experiences have been targeted most frequently. According to the American Library Association, the number of book challenges in 2025 was more than double the previous record, set just one year earlier.

The Ideological Targets

While the legislation is often framed in neutral terms (“protecting children,” “ensuring parental rights,” “preventing indoctrination”), the pattern of targets reveals a clear ideological agenda.

Race and racism education has been the primary focus. The concept of “critical race theory” has been stretched far beyond its academic meaning to encompass virtually any discussion of systemic racism in American history. Laws in multiple states prohibit teaching that the United States is “fundamentally racist” or that an individual’s race determines their moral character.

The practical effect is to sanitize American history. Discussions of slavery, Jim Crow, redlining, and ongoing discrimination become risky when any mention might be interpreted as suggesting the country remains systemically racist. Teachers report avoiding these topics entirely rather than risk complaints or legal action.

Gender and LGBTQ+ topics have emerged as a second major target. Florida’s Parental Rights in Education Act, dubbed “Don’t Say Gay” by critics, prohibited classroom instruction on sexual orientation or gender identity in certain grades. Similar laws have followed in Alabama, Arkansas, and other states.

For LGBTQ+ students, the effect has been profoundly isolating. When teachers cannot discuss their identities, when books featuring same-sex parents disappear from libraries, when the curriculum offers no reflection of their existence, the message is clear: you do not belong here. Suicide prevention experts warn that such erasure has measurable consequences for vulnerable youth.

Abortion and reproductive rights discussions have also been restricted. In states with abortion bans, teachers report uncertainty about whether they can discuss reproductive health, contraception, or even the history of abortion rights. Some districts have preemptively removed such topics from curricula to avoid legal risk.

Climate change instruction has faced challenges as well, though with less success. In states where fossil fuel industries hold political power, legislation has been introduced requiring “balanced” teaching of climate science—meaning that settled scientific consensus must be presented alongside industry-funded skepticism.

The Book Banning Epidemic

If the legislative battle has been fought in state capitols, its most visible front has been the school library. Across the country, organized campaigns have targeted hundreds of books for removal, often with remarkable success.

The numbers are staggering. According to PEN America, more than 5,000 individual book bans occurred in the 2024-2025 school year, affecting over 2,000 unique titles. This represents a tenfold increase from just three years earlier. Schools in Texas, Florida, and Missouri account for the largest share, but no region has been untouched.

The targets reveal the agenda. Of the 120 most frequently banned books, the vast majority deal with race, racism, or LGBTQ+ experiences. Toni Morrison’s “The Bluest Eye,” Maia Kobabe’s “Gender Queer,” and George M. Johnson’s “All Boys Aren’t Blue” appear repeatedly on challenge lists. Books by Black authors, LGBTQ+ authors, and authors of color are disproportionately targeted.

The organized nature of the campaigns distinguishes this moment from past book challenges. Earlier efforts were typically local, driven by individual parents concerned about particular books. Today’s challenges are often coordinated by national organizations that provide templates, legal support, and publicity. Moms for Liberty, No Left Turn in Education, and other groups have turned book challenges into a political strategy rather than a parental concern.

The chilling effect extends beyond banned books. When publishers see which titles are targeted, they become reluctant to acquire similar works. When authors see colleagues subjected to harassment, they think twice about tackling controversial topics. When teachers see librarians facing investigation, they self-censor. The visible bans are only the tip of a much larger iceberg of suppressed speech.

Federal-Level Pressure

While state legislatures have been the primary battleground, federal action has amplified and encouraged state efforts. The Trump administration’s “Restoring Freedom of Speech and Ending Federal Censorship” executive order, issued in January 2025, created a permissive environment for state-level restrictions.

Department of Education guidance has shifted dramatically. Where previous administrations encouraged diversity, equity, and inclusion initiatives, the current department has signaled that such programs may violate civil rights laws by discriminating on the basis of race. Investigations have been opened into school districts with robust DEI programs, creating pressure to abandon them voluntarily.

Military base schools have become a particular focus. The Department of Defense operates schools for children of military personnel, and these schools have seen some of the most aggressive book removal efforts. When the federal government itself removes books from its own schools, it sends a powerful signal to state and local officials.

Funding threats have been deployed strategically. The Department of Education has signaled that schools teaching “divisive concepts” risk losing federal funding. While such threats have been made before, the current administration’s willingness to follow through has created genuine fear among school administrators.

The effect has been to create a multi-level pressure system. State laws provide the legal framework. Federal action provides the enforcement mechanism. National organizations provide the political momentum. Together, they create an environment in which academic freedom is under assault from all directions.

The Chilling Effect on Education

The most significant consequences of this legislative assault are not the laws themselves but their effect on educational practice. When teachers fear investigation, when administrators worry about funding, when librarians anticipate challenges, the result is a pervasive chilling effect that extends far beyond the specific topics addressed in legislation.

Teacher self-censorship is widespread and largely invisible. Surveys conducted by the RAND Corporation and other research organizations find that a majority of teachers report avoiding certain topics, removing books from classrooms, or altering lesson plans to avoid potential controversy. Parents and students rarely see these decisions, yet they fundamentally change the educational experience.

The narrowing of curriculum affects all students, not just those in states with restrictive laws. Textbook publishers, seeking the largest possible market, produce materials that avoid controversy entirely. Curriculum providers offer “safe” options that omit any discussion of race, gender, or politics. The result is a homogenized, sanitized education that prepares students poorly for engagement with a complex world.

Teacher attrition has accelerated. Experienced educators, particularly those in subjects like social studies and English that touch on controversial topics, report feeling unable to do their jobs effectively. Many are leaving the profession entirely, taking decades of expertise with them. Their replacements, younger and less experienced, are even more likely to self-censor.

Student learning suffers in ways that will take years to measure. When students cannot discuss difficult topics in structured classroom settings, they turn to unsupervised online spaces where misinformation thrives. When they encounter censorship, they learn that certain questions are forbidden—a lesson fundamentally at odds with the purposes of education in a democratic society.

The long-term consequences for democratic citizenship are profound. Students who learn that controversial topics cannot be discussed in schools learn that democracy itself is a sham. They become either disengaged from political life or susceptible to authoritarian movements that promise to restore “real” discussion outside institutional constraints.

The Organized Movement Behind the Legislation

Understanding this moment requires understanding the movement that has made it possible. The current assault on academic freedom did not emerge spontaneously; it is the product of decades of organizing, funding, and strategic development.

The network of organizations supporting these efforts is extensive and well-funded. Moms for Liberty, founded in 2021, has grown to hundreds of chapters in dozens of states. The organization provides training, resources, and political support for parents seeking to influence local school boards. Its annual summits draw national political figures and mainstream media coverage.

The Manhattan Institute, the Heritage Foundation, and other conservative think tanks have provided intellectual legitimacy and policy templates. Model legislation developed by these organizations appears in bill after bill across multiple states. Their scholars publish reports, testify before legislatures, and shape media coverage of education issues.

The funding behind these efforts is substantial and often opaque. Donor-advised funds, family foundations, and political organizations channel millions of dollars into the movement. While precise figures are difficult to obtain, estimates suggest that organizations promoting educational restrictions have budgets in the hundreds of millions annually.

The political strategy has been remarkably effective. By focusing on school boards and state legislatures—offices with low turnout and limited media attention—the movement has achieved victories disproportionate to its popular support. In many communities, a few hundred organized activists have been able to control educational policy for tens of thousands of students.

Resistance and Legal Challenges

Despite the scale of the assault, resistance has emerged. Across the country, parents, teachers, students, and civil liberties organizations are fighting back through legal challenges, political organizing, and direct action.

The ACLU and other legal organizations have filed lawsuits challenging the most restrictive laws. Claims grounded in the First Amendment, equal protection, and due process, including arguments that the statutes are unconstitutionally vague, have had some success. Federal courts have blocked provisions in Florida, Oklahoma, and other states, though these rulings are often preliminary and subject to appeal.

The Foundation for Individual Rights and Expression (FIRE) has documented academic freedom violations and provided legal support to affected educators and students. FIRE’s tracking of First Amendment cases before the Supreme Court reveals a judiciary increasingly called upon to referee political conflicts that have been reframed as legal disputes.

Student organizing has been particularly effective. In state after state, students have walked out of classes, testified before legislatures, and organized to protect their own educational opportunities. Their voices, often more compelling than adult advocacy, have shifted public opinion and influenced political outcomes.

Local school board elections have become battlegrounds. In communities across the country, progressive and moderate candidates have organized to challenge conservative school board majorities. While results are mixed, the increasing attention to these normally low-profile races suggests a growing recognition of what is at stake.

The anti-SLAPP movement has achieved significant victories in recent years, with numerous jurisdictions adopting legislation designed to deter frivolous lawsuits intended to silence critics. These laws typically provide for expedited dismissal of meritless cases and allow defendants to recover attorneys’ fees, thereby shifting the risk calculus that currently encourages the deployment of litigation as a weapon.

What Comes Next?

The trajectory of this conflict remains uncertain. Both sides have demonstrated commitment and capacity. The outcome will shape American education for a generation.

Escalation seems likely. Supporters of restrictions, emboldened by their successes, show no signs of retreat. New bills are being drafted for the next legislative session, targeting additional topics and imposing stricter requirements. The movement has learned that aggressive tactics work and has little reason to moderate.

Legal battles will continue. Many challenged laws have not yet reached final judicial resolution. The Supreme Court’s composition suggests a receptivity to some arguments for educational restriction, though the Court has historically protected First Amendment rights even in educational settings. The eventual rulings will establish boundaries that neither side can easily cross.

The cultural battle may be decisive. Laws can be changed; court rulings can be reversed. But if a generation of Americans grows up believing that education is indoctrination, that teachers cannot be trusted, and that difficult topics must be suppressed, the damage will be lasting. The cultural outcome may matter more than any legal or political victory.

International attention is growing. The American experiment in educational restriction is being watched closely by authoritarians and democrats alike. Success here would encourage similar efforts elsewhere; failure would demonstrate the resilience of democratic institutions. The stakes extend far beyond America’s borders.

The Imperative of Defense

In the final analysis, the assault on academic freedom is an assault on democracy itself. Democracies require citizens who can think critically, evaluate evidence, and engage with diverse perspectives. Educational systems that suppress controversial topics, eliminate diverse viewpoints, and punish intellectual exploration cannot produce such citizens.

The defense of academic freedom is therefore not a matter of professional concern for educators alone. It is a matter of civic survival for everyone who believes in democratic governance. When teachers cannot teach, students cannot learn what democracy requires.

The response must be comprehensive. Legal defense of challenged educators and students is essential, but it is not enough. Political organizing to elect school board members and legislators committed to academic freedom is necessary, but it too is insufficient. Cultural work to rebuild trust in educational institutions and the value of free inquiry may be the most important task of all.

The more than 70 bills and 22 laws of the past year are not the end of this story. They are the beginning of a conflict that will define American education for decades to come. How that conflict resolves will determine not only what students learn but also what kind of citizens they become and what kind of democracy America remains.

Bernd Pulch is a political commentator, satirist, and investigative journalist covering lawfare, media control, and German politics. His work examines how legal systems are weaponized and what democracy loses when courts become battlefields.


Tags: academic freedom, censorship higher education, book banning 2026, critical race theory bans, Don’t Say Gay laws, Florida Individual Freedom Act, Moms for Liberty, PEN America book bans, school library censorship, teacher self-censorship, curriculum restrictions, parental rights legislation, First Amendment schools, DEI bans, culture war education, textbook censorship, student organizing, ACLU education lawsuits, FIRE academic freedom, educational gag orders
